TL;DR: We launched Podlettr - go check it out!

For nearly two years, my good friend Sérgio Fontes, an accomplished product designer, and I have been working on Podlettr - a great way to quickly catch up with your favourite podcasts. As the name implies, Podlettr is a letter from your podcasts. Reading is faster than listening, and with some AI magic, we convert hours' worth of podcasts into beautiful, easy-to-read weekly newsletters.

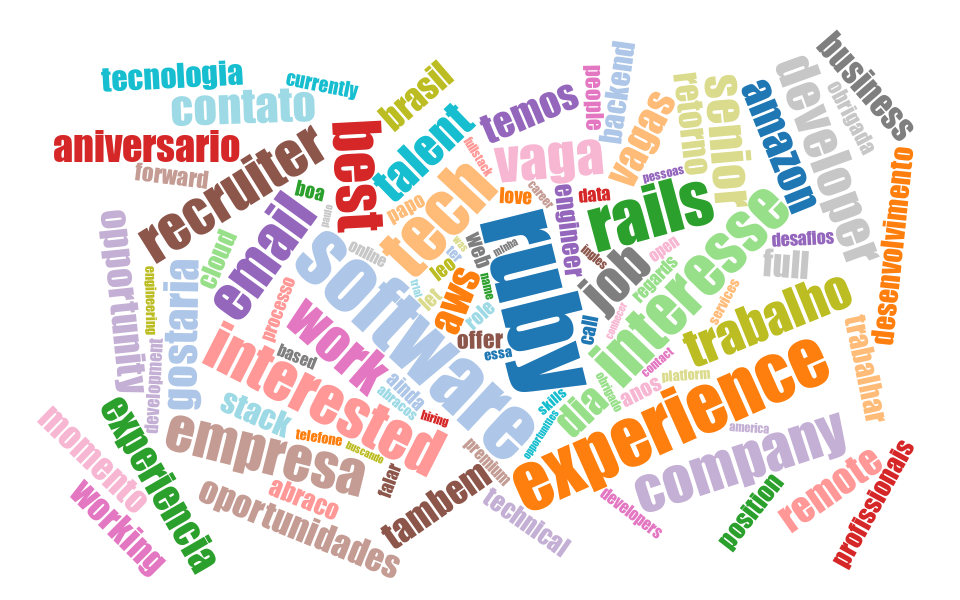

We both have demanding full-time jobs and family duties, so we had to be pragmatic about our framework and architecture choices. Rails was my obvious framework of choice. Within weeks of the initial idea, we had a working prototype.